AI Tool Performs as Well as Experienced Radiologists in Interpreting Chest X-rays

By MedImaging International staff writers | Posted on 23 Dec 2019

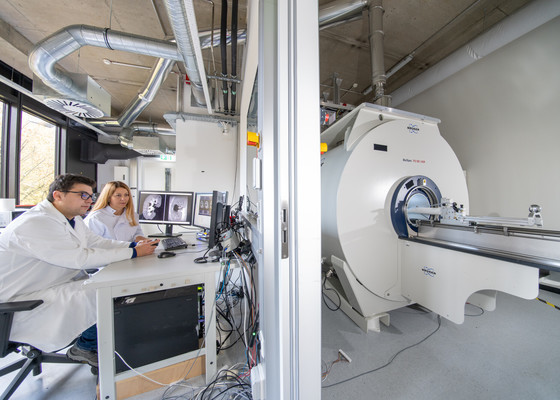

Illustration

A team of researchers from Google Health (Palo Alto, CA, USA) has developed a sophisticated type of artificial intelligence (AI) that can detect clinically meaningful chest X-ray findings as effectively as experienced radiologists. Their findings, based on a type of AI called deep learning, could provide a valuable resource for the future development of AI chest radiography models.

Chest radiography, or X-ray, one of the most common imaging exams worldwide, is performed to help diagnose the source of symptoms like cough, fever and pain. Despite its popularity, the exam has limitations. Deep learning, a sophisticated type of AI in which the computer can be trained to recognize subtle patterns, has the potential to improve chest X-ray interpretation, but it too has limitations. For instance, results derived from one group of patients cannot always be generalized to the population at large.

Researchers at Google Health developed deep learning models for chest X-ray interpretation that overcome some of these limitations. They used two large datasets to develop, train and test the models. The first dataset consisted of more than 750,000 images from five hospitals in India, while the second set included 112,120 images made publicly available by the National Institutes of Health (NIH). A panel of radiologists convened to create the reference standards for certain abnormalities visible on chest X-rays used to train the models.

Tests of the deep learning models showed that they performed on par with radiologists in detecting four findings on frontal chest X-rays: fractures, nodules or masses, opacity (an abnormal appearance on X-rays often indicative of disease) and pneumothorax (the presence of air or gas in the cavity between the lungs and the chest wall). Radiologist adjudication led to increased expert consensus on the labels used for model tuning and performance evaluation. The overall consensus increased from just over 41% after the initial read to almost 97% after adjudication.

"Chest X-ray interpretation is often a qualitative assessment, which is problematic from deep learning standpoint," said Daniel Tse, M.D., product manager at Google Health. "By using a large, diverse set of chest X-ray data and panel-based adjudication, we were able to produce more reliable evaluation for the models."

Related Links:

Google Health

Latest Industry News News

- Bayer and Google Partner on New AI Product for Radiologists

- Samsung and Bracco Enter Into New Diagnostic Ultrasound Technology Agreement

- IBA Acquires Radcal to Expand Medical Imaging Quality Assurance Offering

- International Societies Suggest Key Considerations for AI Radiology Tools

- Samsung's X-Ray Devices to Be Powered by Lunit AI Solutions for Advanced Chest Screening

- Canon Medical and Olympus Collaborate on Endoscopic Ultrasound Systems

- GE HealthCare Acquires AI Imaging Analysis Company MIM Software

- First Ever International Criteria Lays Foundation for Improved Diagnostic Imaging of Brain Tumors

- RSNA Unveils 10 Most Cited Radiology Studies of 2023

- RSNA 2023 Technical Exhibits to Offer Innovations in AI, 3D Printing and More

- AI Medical Imaging Products to Increase Five-Fold by 2035, Finds Study

- RSNA 2023 Technical Exhibits to Highlight Latest Medical Imaging Innovations

- AI-Powered Technologies to Aid Interpretation of X-Ray and MRI Images for Improved Disease Diagnosis

- Hologic and Bayer Partner to Improve Mammography Imaging

- Global Fixed and Mobile C-Arms Market Driven by Increasing Surgical Procedures

- Global Contrast Enhanced Ultrasound Market Driven by Demand for Early Detection of Chronic Diseases

Channels

Radiography

view channel

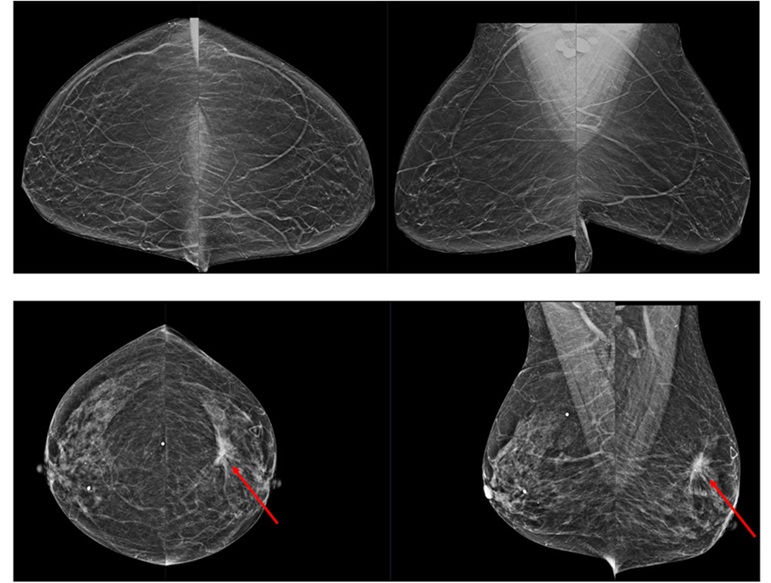

Novel Breast Imaging System Proves As Effective As Mammography

Breast cancer remains the most frequently diagnosed cancer among women. It is projected that one in eight women will be diagnosed with breast cancer during her lifetime, and one in 42 women who turn 50... Read more

AI Assistance Improves Breast-Cancer Screening by Reducing False Positives

Radiologists typically detect one case of cancer for every 200 mammograms reviewed. However, these evaluations often result in false positives, leading to unnecessary patient recalls for additional testing,... Read moreMRI

view channel

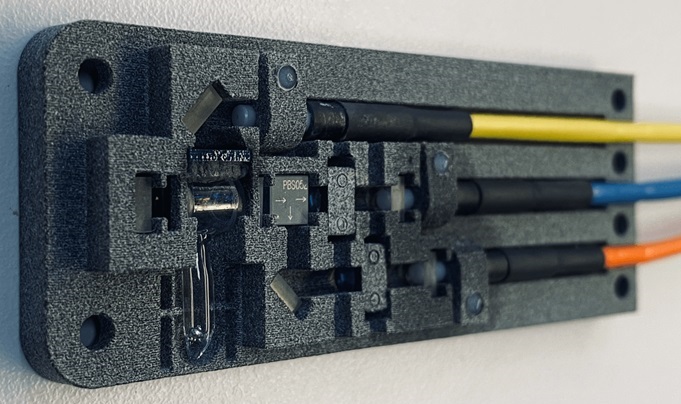

World's First Sensor Detects Errors in MRI Scans Using Laser Light and Gas

MRI scanners are daily tools for doctors and healthcare professionals, providing unparalleled 3D imaging of the brain, vital organs, and soft tissues, far surpassing other imaging technologies in quality.... Read more

Diamond Dust Could Offer New Contrast Agent Option for Future MRI Scans

Gadolinium, a heavy metal used for over three decades as a contrast agent in medical imaging, enhances the clarity of MRI scans by highlighting affected areas. Despite its utility, gadolinium not only... Read more.jpg)

Combining MRI with PSA Testing Improves Clinical Outcomes for Prostate Cancer Patients

Prostate cancer is a leading health concern globally, consistently being one of the most common types of cancer among men and a major cause of cancer-related deaths. In the United States, it is the most... Read moreUltrasound

view channel

Largest Model Trained On Echocardiography Images Assesses Heart Structure and Function

Foundation models represent an exciting frontier in generative artificial intelligence (AI), yet many lack the specialized medical data needed to make them applicable in healthcare settings.... Read more.jpg)

Groundbreaking Technology Enables Precise, Automatic Measurement of Peripheral Blood Vessels

The current standard of care of using angiographic information is often inadequate for accurately assessing vessel size in the estimated 20 million people in the U.S. who suffer from peripheral vascular disease.... Read more

Deep Learning Advances Super-Resolution Ultrasound Imaging

Ultrasound localization microscopy (ULM) is an advanced imaging technique that offers high-resolution visualization of microvascular structures. It employs microbubbles, FDA-approved contrast agents, injected... Read more

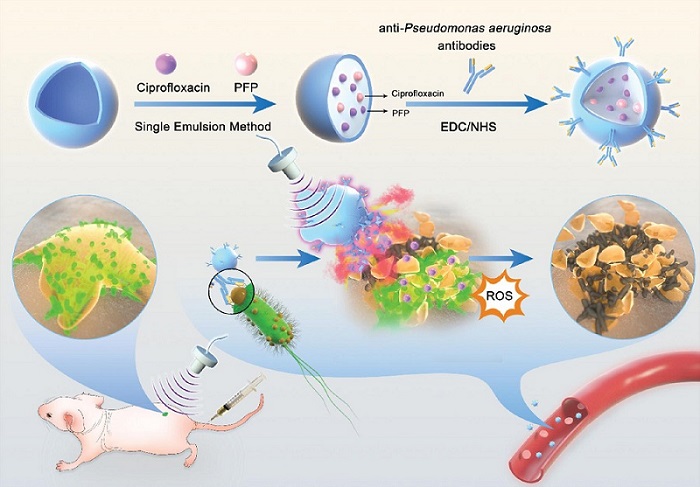

Novel Ultrasound-Launched Targeted Nanoparticle Eliminates Biofilm and Bacterial Infection

Biofilms, formed by bacteria aggregating into dense communities for protection against harsh environmental conditions, are a significant contributor to various infectious diseases. Biofilms frequently... Read moreNuclear Medicine

view channel

New Imaging Technique Monitors Inflammation Disorders without Radiation Exposure

Imaging inflammation using traditional radiological techniques presents significant challenges, including radiation exposure, poor image quality, high costs, and invasive procedures. Now, new contrast... Read more

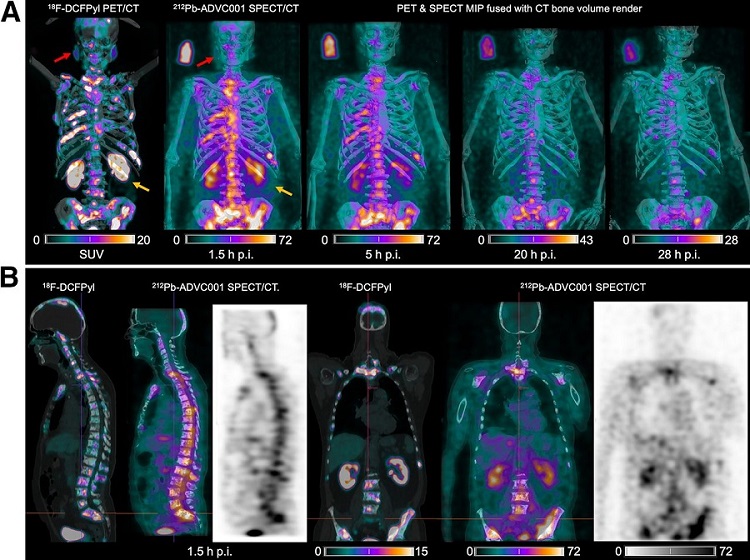

New SPECT/CT Technique Could Change Imaging Practices and Increase Patient Access

The development of lead-212 (212Pb)-PSMA–based targeted alpha therapy (TAT) is garnering significant interest in treating patients with metastatic castration-resistant prostate cancer. The imaging of 212Pb,... Read moreNew Radiotheranostic System Detects and Treats Ovarian Cancer Noninvasively

Ovarian cancer is the most lethal gynecological cancer, with less than a 30% five-year survival rate for those diagnosed in late stages. Despite surgery and platinum-based chemotherapy being the standard... Read more

AI System Automatically and Reliably Detects Cardiac Amyloidosis Using Scintigraphy Imaging

Cardiac amyloidosis, a condition characterized by the buildup of abnormal protein deposits (amyloids) in the heart muscle, severely affects heart function and can lead to heart failure or death without... Read moreGeneral/Advanced Imaging

view channel

PET Scans Reveal Hidden Inflammation in Multiple Sclerosis Patients

A key challenge for clinicians treating patients with multiple sclerosis (MS) is that after a certain amount of time, they continue to worsen even though their MRIs show no change. A new study has now... Read more

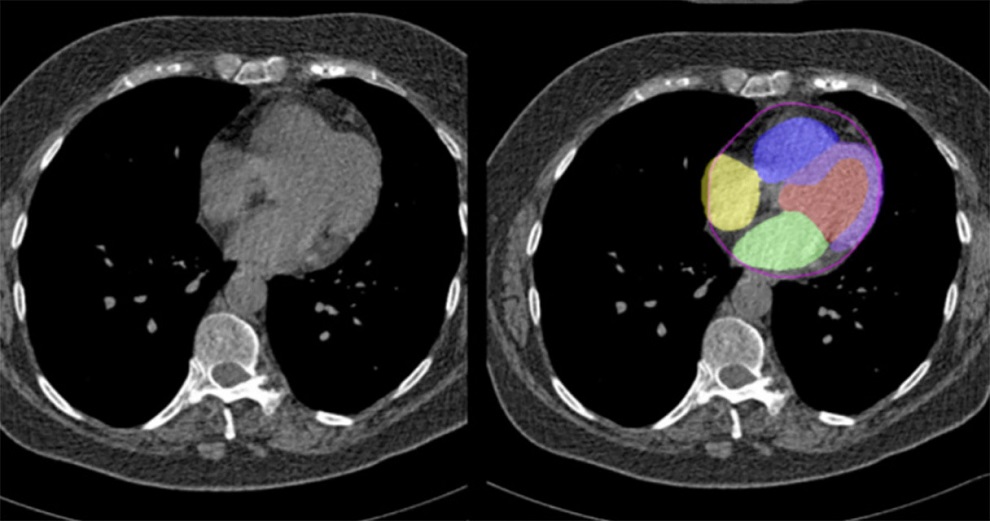

Artificial Intelligence Evaluates Cardiovascular Risk from CT Scans

Chest computed tomography (CT) is a common diagnostic tool, with approximately 15 million scans conducted each year in the United States, though many are underutilized or not fully explored.... Read more

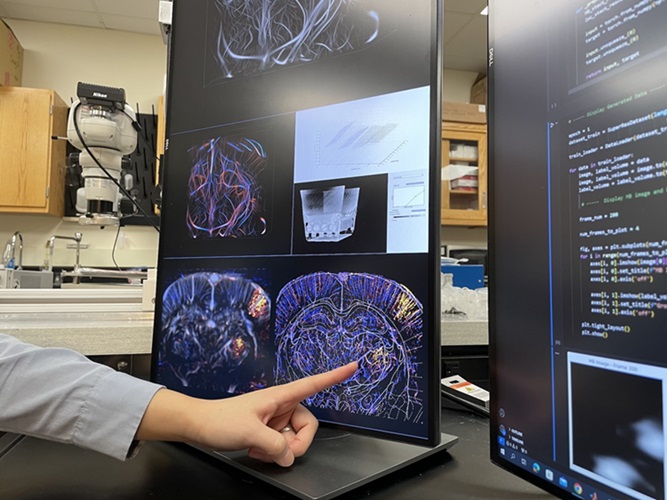

New AI Method Captures Uncertainty in Medical Images

In the field of biomedicine, segmentation is the process of annotating pixels from an important structure in medical images, such as organs or cells. Artificial Intelligence (AI) models are utilized to... Read more.jpg)

CT Coronary Angiography Reduces Need for Invasive Tests to Diagnose Coronary Artery Disease

Coronary artery disease (CAD), one of the leading causes of death worldwide, involves the narrowing of coronary arteries due to atherosclerosis, resulting in insufficient blood flow to the heart muscle.... Read moreImaging IT

view channel

New Google Cloud Medical Imaging Suite Makes Imaging Healthcare Data More Accessible

Medical imaging is a critical tool used to diagnose patients, and there are billions of medical images scanned globally each year. Imaging data accounts for about 90% of all healthcare data1 and, until... Read more