Augmented-Reality Enhances Surgical Navigation Technology

By MedImaging International staff writers. Posted on 25 Jan 2017

Image: Augmented-reality surgical navigation technology enhances X-ray guidance (Photo courtesy of Philips Healthcare).

A combination of three-dimensional (3D) X-ray and advanced optics provides surgeons with augmented-reality imaging during spine, cranial, and trauma surgical procedures.

The Royal Philips augmented-reality surgical navigation technology is designed to help surgeons perform image-guided open and minimally invasive spine surgery in a hybrid operating room (OR). Due to the inherently reduced visibility of the spine and other structures during such procedures, surgeons rely on real-time imaging and navigation solutions in order to guide their surgical tools and implants. The same is true for minimally invasive cranial surgery and surgery on complex trauma fractures.

The new Philips technology adds capabilities to the company’s low-dose X-ray system, using high-resolution optical cameras mounted on the X-ray flat panel detector (FPD) to image the surface of the patient. It then combines the external view captured by the cameras with the internal 3D view acquired by the X-ray system to construct an augmented-reality view of the patient’s external and internal anatomy, improving procedural planning, surgical tool navigation, and implant accuracy, as well as reducing procedure times.

The first pre-clinical study on the technology was undertaken as a collaboration between Philips, Karolinska University Hospital, Cincinnati Children's Hospital Medical Center, and eight other medical centers. The results showed significantly higher overall accuracy for pedicle screw placement with the technology (85%) than without it (64%). The study was published in the November 2016 issue of Spine.

“This new technology allows us to intraoperatively make a high-resolution 3D image of the patient’s spine, plan the optimal device path, and subsequently place pedicle screws using the system’s fully-automatic augmented-reality navigation,” said study co-author Stefan Skúlason, MD, of Landspitali University Hospital (Reykjavik, Iceland). “We can also check the overall result in 3D in the OR without the need to move the patient to a CT scanner. And all this can be done without any radiation exposure to the surgeon and with minimal dose to the patient.”

Latest Radiography News

- Novel Breast Imaging System Proves As Effective As Mammography

- AI Assistance Improves Breast-Cancer Screening by Reducing False Positives

- AI Could Boost Clinical Adoption of Chest DDR

- 3D Mammography Almost Halves Breast Cancer Incidence between Two Screening Tests

- AI Model Predicts 5-Year Breast Cancer Risk from Mammograms

- Deep Learning Framework Detects Fractures in X-Ray Images With 99% Accuracy

- Direct AI-Based Medical X-Ray Imaging System a Paradigm-Shift from Conventional DR and CT

- Chest X-Ray AI Solution Automatically Identifies, Categorizes and Highlights Suspicious Areas

- AI Diagnoses Wrist Fractures As Well As Radiologists

- Annual Mammography Beginning At 40 Cuts Breast Cancer Mortality By 42%

- 3D Human GPS Powered By Light Paves Way for Radiation-Free Minimally-Invasive Surgery

- Novel AI Technology to Revolutionize Cancer Detection in Dense Breasts

- AI Solution Provides Radiologists with 'Second Pair' Of Eyes to Detect Breast Cancers

- AI Helps General Radiologists Achieve Specialist-Level Performance in Interpreting Mammograms

- Novel Imaging Technique Could Transform Breast Cancer Detection

- Computer Program Combines AI and Heat-Imaging Technology for Early Breast Cancer Detection

Channels

MRI

view channel

Diamond Dust Could Offer New Contrast Agent Option for Future MRI Scans

Gadolinium, a heavy metal used for over three decades as a contrast agent in medical imaging, enhances the clarity of MRI scans by highlighting affected areas. Despite its utility, gadolinium not only... Read more.jpg)

Combining MRI with PSA Testing Improves Clinical Outcomes for Prostate Cancer Patients

Prostate cancer is a leading health concern globally, consistently being one of the most common types of cancer among men and a major cause of cancer-related deaths. In the United States, it is the most... Read more

PET/MRI Improves Diagnostic Accuracy for Prostate Cancer Patients

The Prostate Imaging Reporting and Data System (PI-RADS) is a five-point scale to assess potential prostate cancer in MR images. PI-RADS category 3 which offers an unclear suggestion of clinically significant... Read more

Next Generation MR-Guided Focused Ultrasound Ushers In Future of Incisionless Neurosurgery

Essential tremor, often called familial, idiopathic, or benign tremor, leads to uncontrollable shaking that significantly affects a person’s life. When traditional medications do not alleviate symptoms,... Read moreUltrasound

view channel.jpg)

Groundbreaking Technology Enables Precise, Automatic Measurement of Peripheral Blood Vessels

The current standard of care of using angiographic information is often inadequate for accurately assessing vessel size in the estimated 20 million people in the U.S. who suffer from peripheral vascular disease.... Read more

Deep Learning Advances Super-Resolution Ultrasound Imaging

Ultrasound localization microscopy (ULM) is an advanced imaging technique that offers high-resolution visualization of microvascular structures. It employs microbubbles, FDA-approved contrast agents, injected... Read more

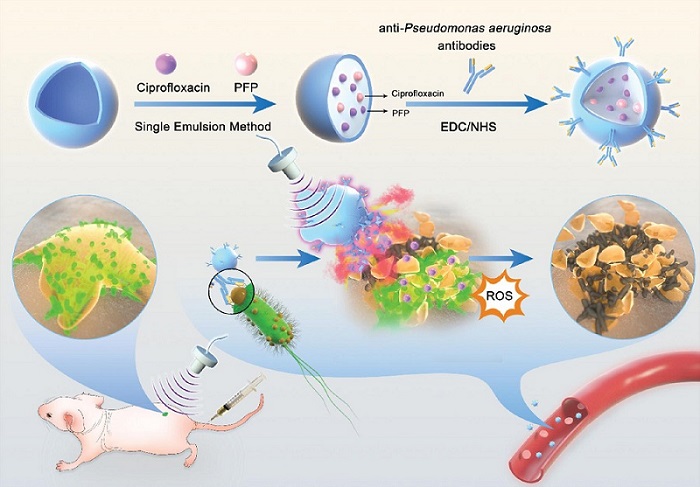

Novel Ultrasound-Launched Targeted Nanoparticle Eliminates Biofilm and Bacterial Infection

Biofilms, formed by bacteria aggregating into dense communities for protection against harsh environmental conditions, are a significant contributor to various infectious diseases. Biofilms frequently... Read moreNuclear Medicine

view channel

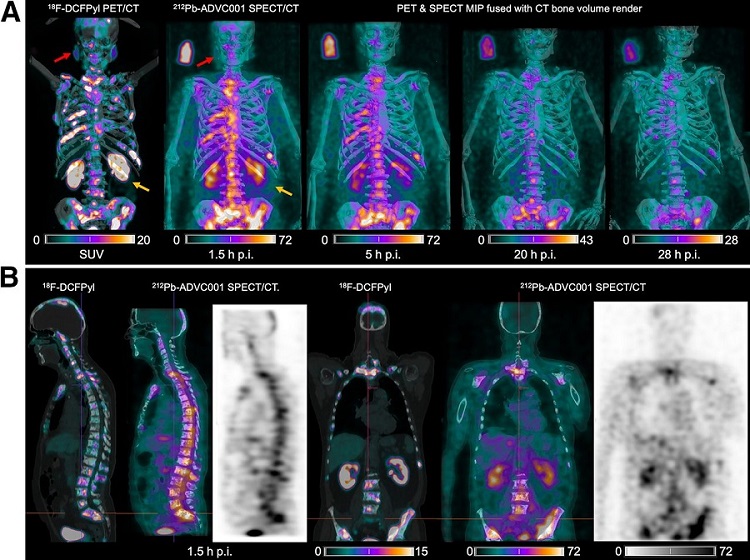

New SPECT/CT Technique Could Change Imaging Practices and Increase Patient Access

The development of lead-212 (212Pb)-PSMA–based targeted alpha therapy (TAT) is garnering significant interest in treating patients with metastatic castration-resistant prostate cancer. The imaging of 212Pb,... Read moreNew Radiotheranostic System Detects and Treats Ovarian Cancer Noninvasively

Ovarian cancer is the most lethal gynecological cancer, with less than a 30% five-year survival rate for those diagnosed in late stages. Despite surgery and platinum-based chemotherapy being the standard... Read more

AI System Automatically and Reliably Detects Cardiac Amyloidosis Using Scintigraphy Imaging

Cardiac amyloidosis, a condition characterized by the buildup of abnormal protein deposits (amyloids) in the heart muscle, severely affects heart function and can lead to heart failure or death without... Read moreGeneral/Advanced Imaging

view channel

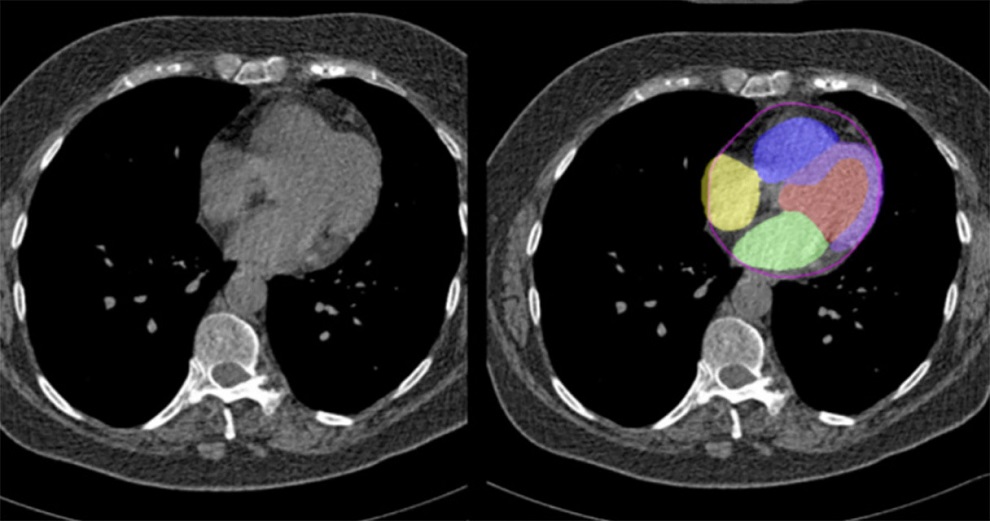

Artificial Intelligence Evaluates Cardiovascular Risk from CT Scans

Chest computed tomography (CT) is a common diagnostic tool, with approximately 15 million scans conducted each year in the United States, though many are underutilized or not fully explored.... Read more

New AI Method Captures Uncertainty in Medical Images

In the field of biomedicine, segmentation is the process of annotating pixels from an important structure in medical images, such as organs or cells. Artificial Intelligence (AI) models are utilized to... Read more.jpg)

CT Coronary Angiography Reduces Need for Invasive Tests to Diagnose Coronary Artery Disease

Coronary artery disease (CAD), one of the leading causes of death worldwide, involves the narrowing of coronary arteries due to atherosclerosis, resulting in insufficient blood flow to the heart muscle.... Read more

Novel Blood Test Could Reduce Need for PET Imaging of Patients with Alzheimer’s

Alzheimer's disease (AD), a condition marked by cognitive decline and the presence of beta-amyloid (Aβ) plaques and neurofibrillary tangles in the brain, poses diagnostic challenges. Amyloid positron emission... Read moreImaging IT

view channel

New Google Cloud Medical Imaging Suite Makes Imaging Healthcare Data More Accessible

Medical imaging is a critical tool used to diagnose patients, and there are billions of medical images scanned globally each year. Imaging data accounts for about 90% of all healthcare data1 and, until... Read more

Global AI in Medical Diagnostics Market to Be Driven by Demand for Image Recognition in Radiology

The global artificial intelligence (AI) in medical diagnostics market is expanding with early disease detection being one of its key applications and image recognition becoming a compelling consumer proposition... Read moreIndustry News

view channel

Bayer and Google Partner on New AI Product for Radiologists

Medical imaging data comprises around 90% of all healthcare data, and it is a highly complex and rich clinical data modality and serves as a vital tool for diagnosing patients. Each year, billions of medical... Read more