Interactive AI Tool Supports Explainable Lung Nodule Assessment

Posted on 16 May 2026

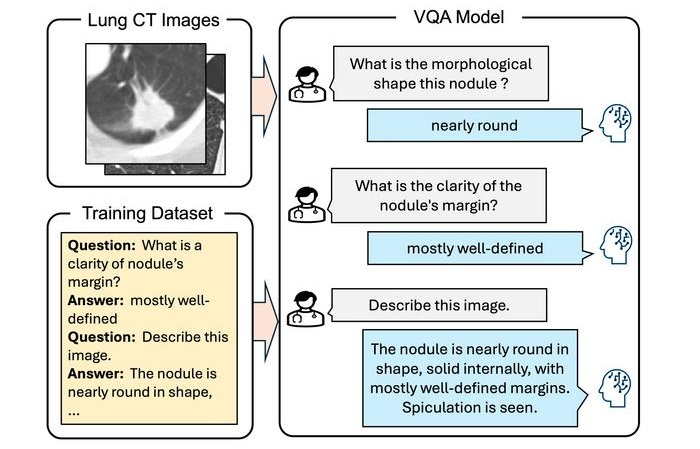

Lung cancer is a leading cause of cancer mortality, and timely characterization of pulmonary nodules on chest computed tomography (CT) is essential for directing care. Interpreting nodule morphology demands expert judgment and can vary across readers. Conventional artificial intelligence has improved detection but often yields opaque, binary outputs that are hard to trust and apply. To help address this challenge, researchers have developed a vision–language model that generates clinically oriented descriptions from CT images through interactive visual question answering.

Developed at Meijo University (Nagoya, Japan), the diagnostic support framework uses visual question answering (VQA) to enable clinicians to pose targeted queries about nodule appearance and receive descriptive findings. The approach is intended to mirror the language of physician-authored reports to improve usability in clinical workflows. The work was published in the International Journal of Computer Assisted Radiology and Surgery.

The team built a training corpus from the Lung Image Database Consortium and Image Database Resource Initiative (LIDC-IDRI). Structured annotations describing sphericity, margin, texture, lobulation, spiculation, and calcification were converted into natural language statements. These were paired with corresponding clinical questions to link images, questions, and reference findings. The vision–language model was then fine-tuned to answer physician-driven prompts using both visual input and the curated text.
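The conversion from structured annotations to question and answer pairs might look roughly like the sketch below. It is an illustration only, not the authors' code: the question wording, the statement templates, the `VQASample` type, and the `bucket` and `build_samples` helpers are all hypothetical, and it assumes every attribute arrives as an ordinal rating from 1 to 5, which simplifies how the dataset actually encodes some attributes.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical clinical questions, one per annotated attribute.
QUESTIONS: Dict[str, str] = {
    "sphericity": "What is the shape of the nodule?",
    "margin": "How well defined is the nodule margin?",
    "spiculation": "Is the nodule spiculated?",
}

# Hypothetical statement templates keyed by a coarse rating level.
STATEMENTS: Dict[str, Dict[str, str]] = {
    "sphericity": {
        "low": "The nodule is elongated.",
        "mid": "The nodule is ovoid.",
        "high": "The nodule is round.",
    },
    "margin": {
        "low": "The nodule margin is poorly defined.",
        "mid": "The nodule margin is moderately defined.",
        "high": "The nodule margin is sharp.",
    },
    "spiculation": {
        "low": "No spiculation is seen.",
        "mid": "Mild spiculation is seen.",
        "high": "Marked spiculation is seen.",
    },
}


def bucket(rating: int) -> str:
    """Collapse an assumed 1-5 ordinal rating into low / mid / high."""
    if not 1 <= rating <= 5:
        raise ValueError(f"rating out of range: {rating}")
    if rating <= 2:
        return "low"
    if rating == 3:
        return "mid"
    return "high"


@dataclass
class VQASample:
    image_id: str   # identifier of the CT image or nodule crop
    question: str   # clinical question posed to the model
    answer: str     # reference finding in natural language


def build_samples(image_id: str, ratings: Dict[str, int]) -> List[VQASample]:
    """Turn one nodule's attribute ratings into image-question-answer triples."""
    samples = []
    for attribute, rating in ratings.items():
        level = bucket(rating)
        samples.append(
            VQASample(image_id, QUESTIONS[attribute], STATEMENTS[attribute][level])
        )
    return samples


# Example usage with a made-up nodule identifier and ratings.
for sample in build_samples("nodule_0001", {"sphericity": 5, "margin": 1}):
    print(sample.question, "->", sample.answer)
```

Each resulting triple links an image, a question, and a reference finding, which is the shape of data a vision–language model would need for fine-tuning on physician-style prompts.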

In testing, the system generated clinically meaningful and linguistically natural descriptions that aligned with reference findings. Quantitative evaluation reported a CIDEr score of 3.896. CIDEr measures how closely the weighted n-grams of generated text match those of reference text, so the result indicates strong agreement with the reference findings. The model also maintained consistency across key morphological attributes, supporting reliable interpretation of pulmonary nodules. By enabling targeted questions about shape or internal structure, the framework offers detailed and explainable outputs rather than simple classifications.
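For readers unfamiliar with CIDEr, the sketch below shows the core idea behind the metric: TF-IDF-weighted n-gram vectors for the generated and reference text, compared by cosine similarity and averaged over n-gram lengths 1 to 4. It is a simplified illustration with hypothetical function names, not the evaluation code used in the study. It handles a single reference per candidate and omits details of reference implementations such as scaling and length penalties, so its values are not comparable to the reported score.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

NGram = Tuple[str, ...]


def ngram_counts(text: str, n: int) -> Counter:
    """Count the n-grams of a whitespace-tokenized, lowercased sentence."""
    tokens = text.lower().split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def tfidf_vector(counts: Counter, doc_freq: Counter, num_docs: int) -> Dict[NGram, float]:
    """Weight each n-gram by term frequency times inverse document frequency."""
    total = sum(counts.values())
    vector = {}
    for gram, count in counts.items():
        # N-grams never seen in the corpus are treated as appearing once.
        idf = math.log(num_docs / max(1, doc_freq.get(gram, 0)))
        vector[gram] = (count / total) * idf
    return vector


def cosine(a: Dict[NGram, float], b: Dict[NGram, float]) -> float:
    """Cosine similarity of two sparse vectors; 0.0 if either is all zeros."""
    dot = sum(weight * b.get(gram, 0.0) for gram, weight in a.items())
    norm = math.sqrt(sum(w * w for w in a.values())) * math.sqrt(
        sum(w * w for w in b.values())
    )
    return dot / norm if norm > 0 else 0.0


def cider_like(candidate: str, reference: str, corpus: List[str], max_n: int = 4) -> float:
    """Average TF-IDF cosine similarity over n-gram lengths 1..max_n."""
    scores = []
    for n in range(1, max_n + 1):
        # Document frequency: in how many corpus references each n-gram occurs.
        doc_freq: Counter = Counter()
        for doc in corpus:
            doc_freq.update(set(ngram_counts(doc, n)))
        cand_vec = tfidf_vector(ngram_counts(candidate, n), doc_freq, len(corpus))
        ref_vec = tfidf_vector(ngram_counts(reference, n), doc_freq, len(corpus))
        scores.append(cosine(cand_vec, ref_vec))
    return sum(scores) / max_n
```

The inverse document frequency term down-weights phrases that appear in most references (for example "the nodule"), so agreement on distinctive findings such as a margin or spiculation description counts for more than agreement on generic wording.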

The researchers note that presenting results in a question-and-answer format can improve transparency into the reasoning process. This interactive capability can support report writing, enhance training for medical professionals, and reduce inconsistencies in diagnosis. The authors suggest the approach may help standardize practice in the near term and aid integration of advanced diagnostic tools into clinical workflows over time.

“Conventional AI diagnostic support methods lacked explainability because they mainly focused on classifying lesions as benign or malignant from medical images. This made it difficult for clinicians to interpret and utilize the results. Thus, our goal was to generate findings similar to those written by physicians to improve the usability and acceptance of AI outputs,” said Ms. Maiko Nagao, graduate student, Graduate School of Science and Technology, Meijo University, Japan.
